Overview

The Wan AI Video Generator: An In-Depth Look for VideoAny Users

This guide explores the Wan AI video generation model, developed by Alibaba's Tongyi Lab, and its practical applications within a production workflow.

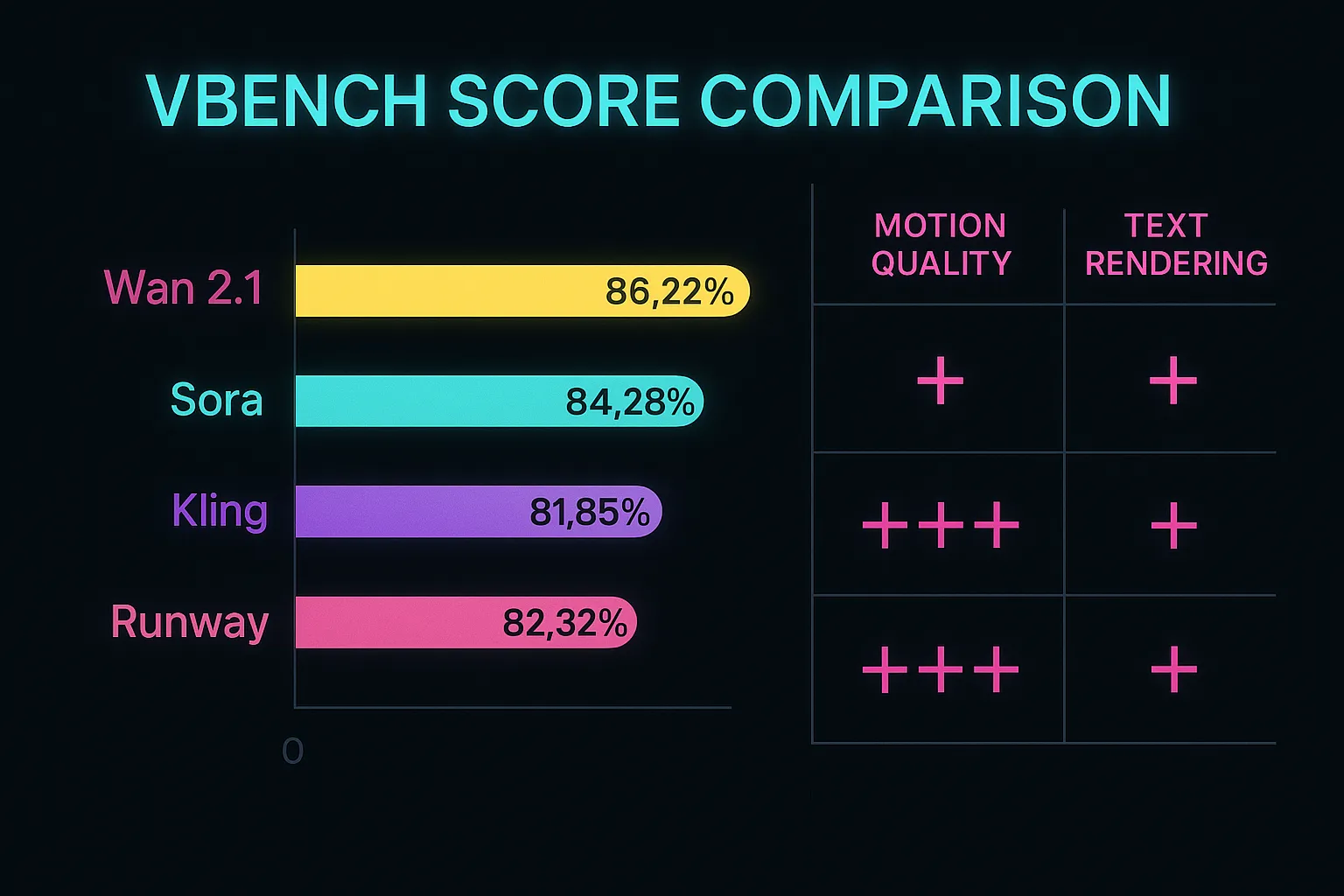

The Wan AI video generator is an open-source solution for creating video from text or images, released under the Apache 2.0 license. It consistently achieves high scores on benchmarks like VBench, often surpassing closed-source alternatives.

This guide covers Wan's core architecture, performance metrics, options for local and cloud deployment, and how it stacks up against other leading models like Sora, Kling, and Runway.

Whether you're looking to integrate Wan into your existing pipeline or understand its capabilities for new projects, this resource provides a comprehensive overview.

What you will learn about Wan AI

- Its foundational architecture and key innovations

- Performance benchmarks and comparisons to other models

- Different versions and their specific capabilities

- Practical deployment options for various use cases


Model Comparison

Wan AI: Key Features and Evolution

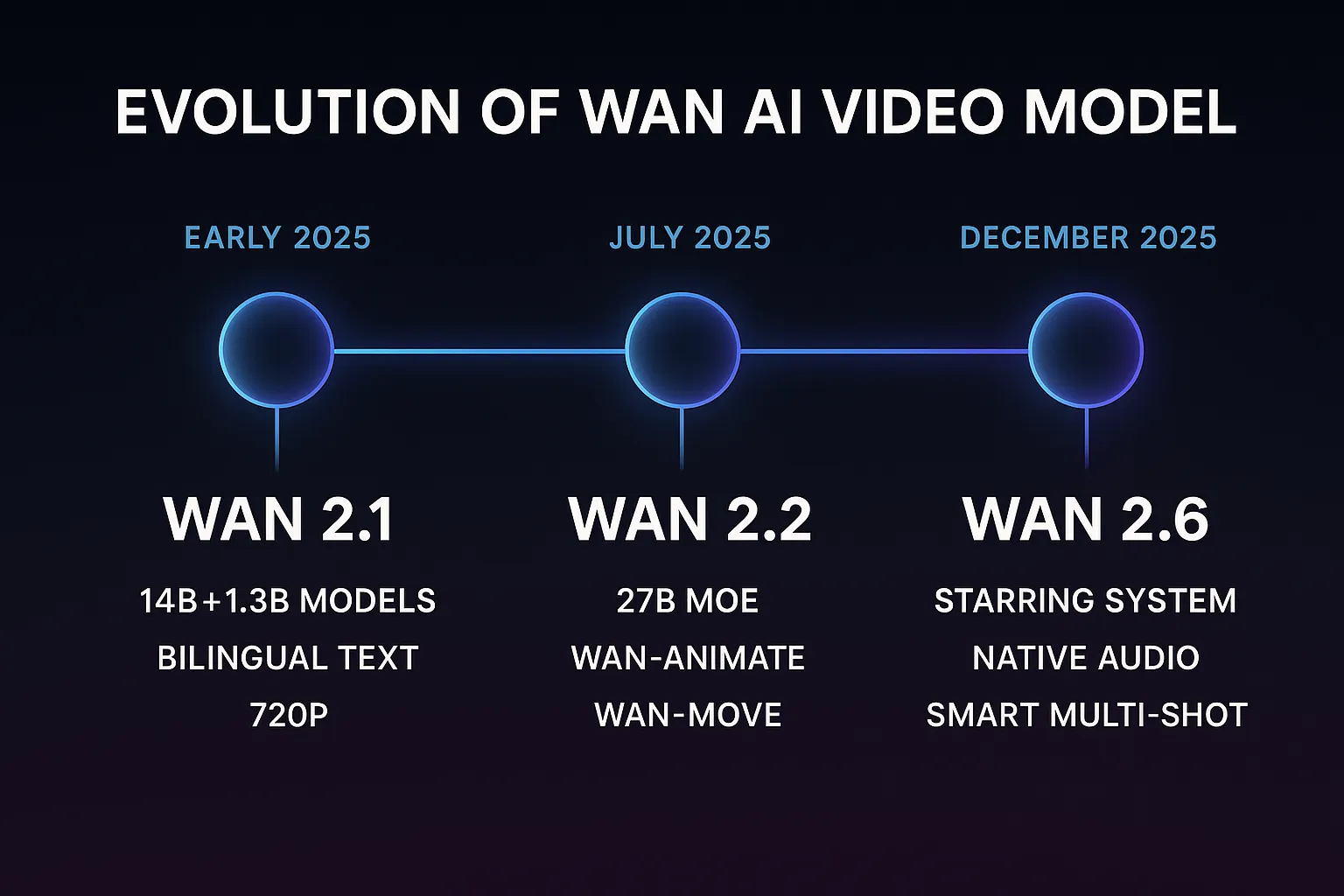

Understand the progression of Wan AI through its major versions and their core enhancements.

| Version | Key Innovations | Capabilities | Focus |
|---|---|---|---|
| Wan 2.1 | Foundational architecture, 3D Causal Wan-VAE | Text-to-Video (T2V), Image-to-Video (I2V), Bilingual prompting (umT5) | Establishing coherent motion and prompt adherence |
| Wan 2.2 | Mixture-of-Experts (MoE) architecture, 27B parameters | Improved inference efficiency, enhanced motion realism and color accuracy | Optimizing performance and output quality |
| Wan 2.6 | Starring System (R2V), Native Audio (V2A), Smart Multi-Shot | Consistent character identity, synchronized audio, narrative storytelling | Advancing towards cinematic, multi-shot narratives |
| VideoAny workflow | Integrated toolchain | Less low-level parameter tuning | Creators shipping fast |

Prioritize the workflow that matches your publishing cadence and quality bar.

Technical Deep Dive

Understanding Wan AI's Core Architecture

Wan AI's superior performance stems from several innovative components that differentiate it from other video generation models.

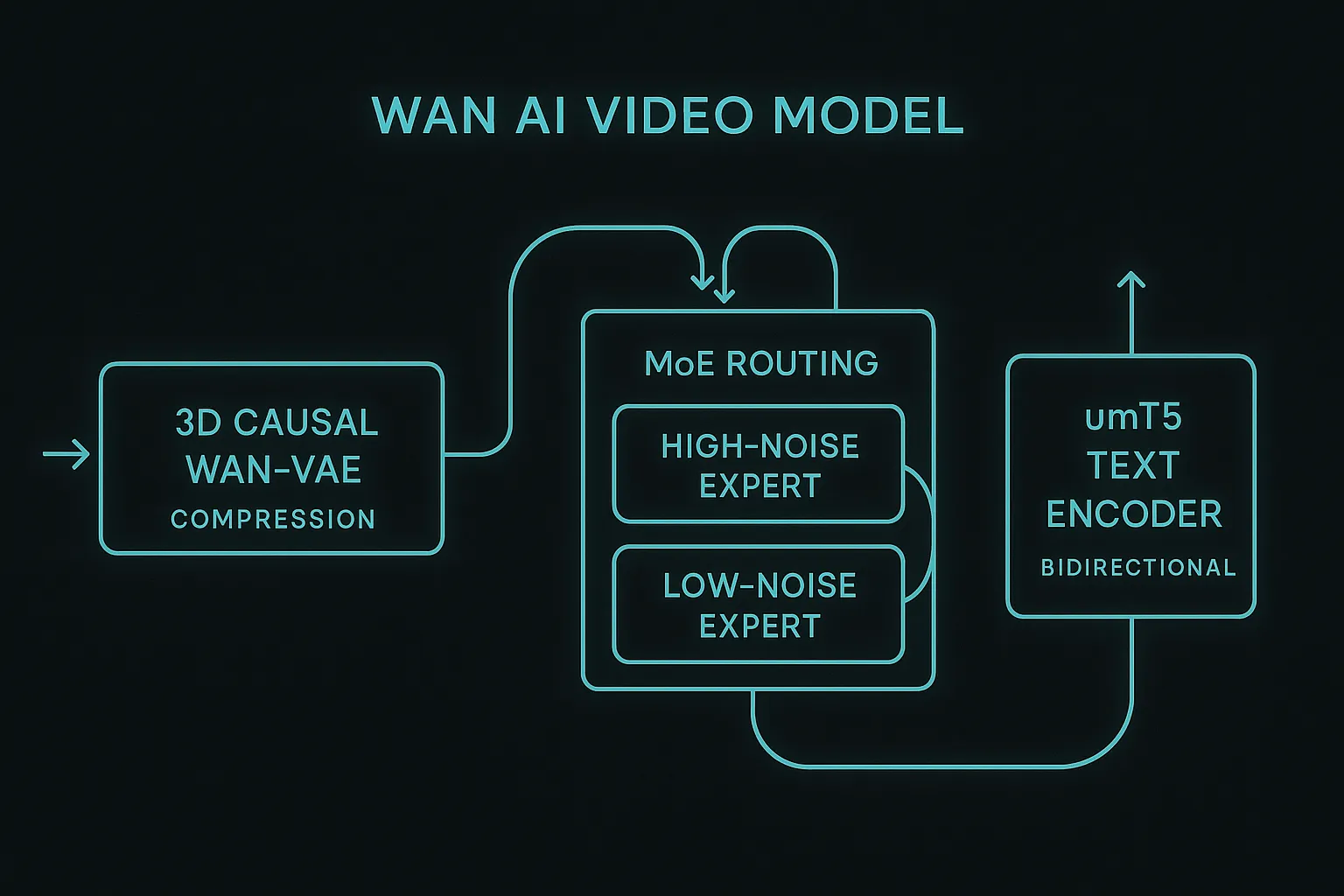

3D Causal Wan-VAE

This custom Variational Autoencoder compresses video frames into a compact latent space, capturing temporal relationships directly.
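To make the compression concrete, the sketch below computes the latent shape for a clip. It assumes the 4 × 8 × 8 (time × height × width) compression and 16 latent channels described for the Wan 2.1 VAE, with the first frame kept as its own latent frame; the function names are hypothetical and not part of Wan's codebase.

```python
from typing import Tuple


def vae_latent_shape(
    frames: int,
    height: int,
    width: int,
    temporal_stride: int = 4,   # assumed temporal compression
    spatial_stride: int = 8,    # assumed spatial compression per axis
    latent_channels: int = 16,  # assumed latent channel count
) -> Tuple[int, int, int, int]:
    """Return (latent_frames, latent_height, latent_width, channels).

    The first frame maps to its own latent frame; each following group of
    `temporal_stride` frames collapses into one latent frame.
    """
    if frames < 1 or (frames - 1) % temporal_stride != 0:
        raise ValueError(f"frames must be 1 + a multiple of {temporal_stride}")
    if height % spatial_stride or width % spatial_stride:
        raise ValueError(f"height and width must be multiples of {spatial_stride}")
    return (
        1 + (frames - 1) // temporal_stride,
        height // spatial_stride,
        width // spatial_stride,
        latent_channels,
    )


def compression_ratio(frames: int, height: int, width: int, rgb_channels: int = 3) -> float:
    """Number of raw RGB values divided by number of latent values for one clip."""
    t, h, w, c = vae_latent_shape(frames, height, width)
    return (frames * height * width * rgb_channels) / (t * h * w * c)


if __name__ == "__main__":
    print(vae_latent_shape(81, 480, 832))            # (21, 60, 104, 16)
    print(round(compression_ratio(81, 480, 832), 1))  # 46.3
```

Under these assumptions, the diffusion model works on roughly 46 times fewer values than the raw 480×832 clip contains, which is why the VAE's quality matters so much for the final output.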

Why it's innovative

- Processes video across height, width, and time dimensions

- Utilizes a Feature Cache Mechanism to reduce redundant computation by 60%

- Approximately 2.5x faster than VAEs in competing models like HunyuanVideo

- Produces higher-fidelity reconstructions of video content

Pricing model: Integrated into the Wan model suite.

Trade-offs: Requires understanding of latent space concepts for advanced fine-tuning.

Best fit: Achieving high-quality video compression and reconstruction.
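The "causal" part and the feature cache can be illustrated with a deliberately simplified, hypothetical 1-D example: each frame is a single number, and one causal convolution runs along the time axis. Each output frame sees only the current and earlier frames, so a video can be processed chunk by chunk, carrying the last few input frames forward as a cache instead of recomputing them. This illustrates the idea only; it is not Wan-VAE's actual code.

```python
from typing import List, Optional, Sequence, Tuple


def causal_temporal_conv(
    frames: Sequence[float],
    weights: Sequence[float],
    cache: Optional[Sequence[float]] = None,
) -> Tuple[List[float], List[float]]:
    """Causal 1-D convolution along time, with an optional carry-over cache.

    Output frame t depends only on input frames <= t. `cache` holds the last
    len(weights) - 1 input frames of the previous chunk; when it is None the
    past is zero-padded, as at the very start of a video.
    Returns (outputs, cache_for_next_chunk).
    """
    k = len(weights)
    history = list(cache) if cache is not None else [0.0] * (k - 1)
    padded = history + list(frames)
    # weights[-1] multiplies the current frame; earlier weights multiply the past
    outputs = [
        sum(w * padded[t + i] for i, w in enumerate(weights))
        for t in range(len(frames))
    ]
    next_cache = padded[len(padded) - (k - 1):]
    return outputs, next_cache


if __name__ == "__main__":
    video = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
    kernel = [0.25, 0.25, 0.5]

    full, _ = causal_temporal_conv(video, kernel)

    # Same video, processed in two chunks with the cache carried across
    first, cache = causal_temporal_conv(video[:2], kernel)
    second, _ = causal_temporal_conv(video[2:], kernel, cache)

    print(full)                    # [0.5, 1.25, 2.25, 3.25, 4.25, 5.25]
    print(first + second == full)  # True
```

The chunked result matches the full-sequence result exactly. That property is what lets a causal video VAE handle long clips in pieces with bounded memory.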

Mixture-of-Experts (MoE) Routing

Introduced in Wan 2.2, this architecture routes denoising steps through specialized sub-networks, improving quality and speed.

Why it's innovative

- Activates only about 14B of its 27B parameters per denoising step, because just one expert runs at a time

- Delivers output quality exceeding dense 14B models at similar costs

- Enables graceful quality scaling by adjusting denoising steps

- Features High-Noise and Low-Noise Experts for different stages of generation

Pricing model: Part of the open-source model.

Trade-offs: Initial setup can be more complex than for simpler dense models.

Best fit: Balancing high quality with efficient inference, especially for larger models.
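The routing idea can be sketched as a sampling loop that hands each denoising step to exactly one expert. This is a hypothetical toy: the experts are stand-in functions and the switch point is a fixed timestep, whereas Wan 2.2 is described as choosing its switch point from the signal-to-noise ratio. What it shows is that per-step compute matches a single expert even though the model holds two.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence, Tuple


@dataclass
class Expert:
    name: str
    denoise: Callable[[float, int], float]  # (latent, timestep) -> updated latent


def two_expert_sampling(
    latent: float,
    timesteps: Sequence[int],
    high_noise: Expert,
    low_noise: Expert,
    boundary: int,
) -> Tuple[float, List[str]]:
    """Run one expert per denoising step and record which one ran.

    Steps at or above `boundary` (early, noisy steps) go to the high-noise
    expert; later steps go to the low-noise expert.
    """
    trace: List[str] = []
    for t in timesteps:
        expert = high_noise if t >= boundary else low_noise
        latent = expert.denoise(latent, t)
        trace.append(expert.name)
    return latent, trace


if __name__ == "__main__":
    # Toy experts; real ones would be full diffusion transformers
    high = Expert("high-noise", lambda x, t: x * 0.8)
    low = Expert("low-noise", lambda x, t: x * 0.95)
    _, trace = two_expert_sampling(1.0, [1000, 800, 600, 400, 200], high, low, boundary=500)
    print(trace)  # ['high-noise', 'high-noise', 'high-noise', 'low-noise', 'low-noise']
```

Early steps settle overall layout and motion, and later steps refine detail, which is why splitting the schedule between two specialists can raise quality without raising per-step cost.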

umT5 Text Encoder

Wan encodes prompts with umT5, a multilingual T5 text encoder, which gives it several advantages over CLIP-based encoders.

Why it's innovative

- Bidirectional attention for richer semantic representations

- Native bilingual support for English and Chinese without translation artifacts

- Supports long prompts up to 512 tokens for detailed scene descriptions

- Enhances prompt adherence and complex interaction handling

Pricing model: Included in the Wan model.

Trade-offs: Requires well-structured prompts to fully leverage its capabilities.

Best fit: Generating videos from complex, detailed, or multilingual text prompts.
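One way to keep prompts well-structured is to assemble them from named parts and run a cheap length check before submitting. The sketch below is hypothetical: the `ShotPrompt` fields are an arbitrary template, not a Wan requirement. Its length check relies on the fact that SentencePiece-style tokenizers, the family T5 models use, emit at least one token per whitespace-separated word. A word count above 512 therefore guarantees the prompt is too long, while a count below it does not guarantee a fit.

```python
from dataclasses import dataclass

MAX_PROMPT_TOKENS = 512  # prompt length limit cited in this guide


@dataclass
class ShotPrompt:
    """Hypothetical prompt template: one clause per aspect of the shot."""
    subject_and_action: str
    setting: str
    camera: str
    lighting: str

    def render(self) -> str:
        clauses = [self.subject_and_action, self.setting, self.camera, self.lighting]
        cleaned = [c.strip().rstrip(".") for c in clauses if c.strip()]
        return ". ".join(cleaned) + "."


def certainly_exceeds_limit(prompt: str, limit: int = MAX_PROMPT_TOKENS) -> bool:
    """Cheap pre-check: True means the prompt is definitely too long.

    Word count is a lower bound on token count for SentencePiece-style
    tokenizers. False does NOT guarantee a fit; confirm with the real tokenizer.
    """
    return len(prompt.split()) > limit


if __name__ == "__main__":
    prompt = ShotPrompt(
        subject_and_action="A red fox trots through fresh snow",
        setting="A pine forest at dawn.",
        camera="Slow tracking shot at ground level",
        lighting="Soft golden backlight",
    ).render()
    print(prompt)
    print(certainly_exceeds_limit(prompt))  # False
```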

Benchmark Performance

Wan AI scores at the top of the VBench benchmark and stands out for motion quality, in-frame text rendering, and prompt adherence.

Why it's a leader

- Top composite score on VBench when Wan 2.1 was released (86.22% vs. Sora's 84.28%)

- Exceptional temporal coherence with fewer artifacts

- Reliable rendering of readable text within video frames

- Accurate color reproduction and natural-looking lighting

Pricing model: Open source, with no licensing fees for self-hosted use; you pay only for hardware and compute.

Trade-offs: Requires hardware investment for local deployment.

Best fit: Projects demanding top-tier quality and benchmark-validated performance.
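To put the two composite scores quoted above in perspective, the gap works out as follows (simple arithmetic on those figures only):

```latex
\Delta_{\text{abs}} = 86.22\% - 84.28\% = 1.94 \text{ percentage points},
\qquad
\Delta_{\text{rel}} = \frac{1.94}{84.28} \approx 2.3\%
```

A lead of about two points on a composite benchmark is meaningful, but it is a ranking signal rather than a guarantee that every clip will look better; test on your own prompts.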

Deployment Options

Running Wan AI: Local vs. Cloud Solutions

Wan AI offers flexible deployment options, catering to both individual creators and production studios.

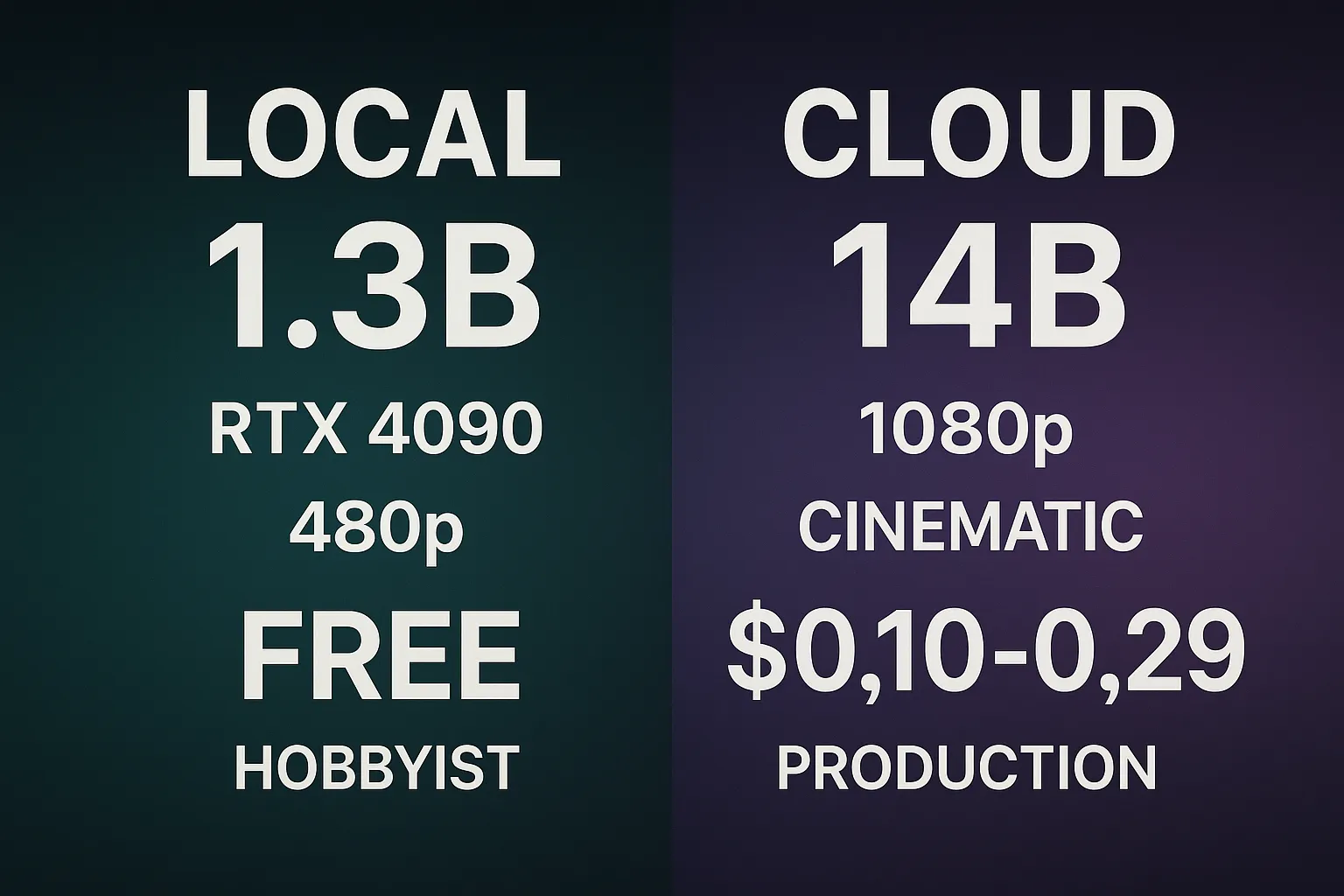

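The dual-model approach of Wan AI provides versatility: a lightweight 1.3-billion-parameter model for consumer hardware and a full 14-billion-parameter model for cinematic quality. The 14B weights are also open, but because they need far more GPU memory, most creators access that model through cloud APIs.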

This flexibility allows creators to experiment locally and scale to production-grade output as needed, optimizing for both cost and quality.

Understanding the requirements for each setup helps in choosing the most suitable environment for your video generation tasks.

Choosing your Wan AI environment

- Local Setup (1.3B Speed Edition): Ideal for rapid prototyping and cost-free iteration on consumer GPUs.

- Cloud API (14B Pro Edition): Best for production-quality output, leveraging powerful cloud infrastructure for cinematic results.

- Hybrid Approach: Prototype locally with the 1.3B model, then generate final outputs via the cloud 14B API to balance cost and quality.

- Final Step: Apply finishing touches, then package and publish the output variants you need.

Treat this as a repeatable operating procedure, not a one-time experiment.
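The hybrid approach boils down to a routing rule: every draft gets a cheap local preview, and only drafts that pass review are re-rendered on the cloud model. A minimal sketch, in which the backend labels and the `Draft` type are hypothetical placeholders rather than any real VideoAny or Wan API:

```python
from dataclasses import dataclass
from typing import List, Tuple

LOCAL_BACKEND = "local-1.3B"  # hypothetical label for the on-device model
CLOUD_BACKEND = "cloud-14B"   # hypothetical label for the hosted 14B API


@dataclass
class Draft:
    prompt: str
    approved: bool = False  # set True once the local preview passes review


def plan_jobs(drafts: List[Draft]) -> List[Tuple[str, str]]:
    """Every draft gets a local preview; only approved drafts also get a
    cloud render, so paid generations are spent on vetted prompts only."""
    jobs: List[Tuple[str, str]] = []
    for draft in drafts:
        jobs.append((LOCAL_BACKEND, draft.prompt))
        if draft.approved:
            jobs.append((CLOUD_BACKEND, draft.prompt))
    return jobs


if __name__ == "__main__":
    plan = plan_jobs([Draft("fox in snow", approved=True), Draft("city at night")])
    for backend, prompt in plan:
        print(f"{backend:>10}  {prompt}")
```

Keeping the rule in code rather than in someone's head makes the cloud budget predictable: the number of paid renders equals the number of approved drafts.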

Local Setup Requirements

Running Wan AI 1.3B Locally

For creators looking to experiment with Wan AI without cloud costs, the 1.3B model is optimized for consumer hardware.

The 1.3B model provides a genuine entry point for generating social media content, especially when combined with basic post-processing.

This setup is perfect for rapid prototyping and iterating on prompts without incurring per-clip generation fees.

Ensure your system meets the minimum specifications for a smooth experience.

Minimum System Specifications for Local Wan AI

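- GPU: NVIDIA RTX 4090 (24GB VRAM) or equivalent for comfortable performance. Alibaba reports that the 1.3B model needs only about 8.2GB of VRAM with model offloading enabled, so GPUs with less memory can also run it.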

- RAM: 32GB system memory to handle model weights and operations.

- Storage: Approximately 15GB for model weights.

- OS: Linux (recommended) or Windows with WSL2 for compatibility and performance.
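Before downloading weights, a short script can compare a machine against the specification list above. This sketch is dependency-free, so GPU VRAM and system RAM are passed in by hand (read them from `nvidia-smi` and your OS); only free disk space and the OS name are detected, via Python's `shutil.disk_usage` and `platform.system()`. The thresholds mirror the listed figures, with the RTX 4090's 24GB as the VRAM target, and are easy to change.

```python
import platform
import shutil
from typing import Dict, List

# Thresholds mirror the spec list above; edit them for your own target.
LISTED_SPECS: Dict[str, float] = {"vram_gb": 24, "ram_gb": 32, "free_disk_gb": 15}


def free_disk_gb(path: str = ".") -> float:
    """Free space on the drive holding `path`, in decimal gigabytes."""
    return shutil.disk_usage(path).free / 1e9


def spec_gaps(vram_gb: float, ram_gb: float, disk_gb: float, os_name: str) -> List[str]:
    """Return one message per unmet spec; an empty list means everything is met."""
    measured = {"vram_gb": vram_gb, "ram_gb": ram_gb, "free_disk_gb": disk_gb}
    gaps = []
    for key, needed in LISTED_SPECS.items():
        if measured[key] < needed:
            gaps.append(f"{key}: have {measured[key]:g}, listed {needed:g}")
    # Under WSL2, platform.system() reports "Linux", so both listed setups pass.
    if os_name != "Linux":
        gaps.append(f"os: {os_name}; the list recommends Linux or Windows with WSL2")
    return gaps


if __name__ == "__main__":
    # Hand-entered example values for a 16GB card with 64GB of system RAM
    report = spec_gaps(vram_gb=16, ram_gb=64, disk_gb=free_disk_gb(), os_name=platform.system())
    print("All listed specs met" if not report else "\n".join(report))
```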

Production quality depends more on review discipline than on one lucky generation.

FAQ

Frequently Asked Questions about Wan AI

What is the Wan AI video generator?

The Wan AI video generator is an open-source text-to-video and image-to-video model developed by Alibaba's Tongyi Lab, known for its high performance and commercial-friendly Apache 2.0 license.

Is Wan AI video generator free to use?

Yes, it is released under the Apache 2.0 license, making it completely free for commercial use. You can download, modify, and deploy it without royalty fees.

How to run Wan AI video generator locally?

You can run the lightweight 1.3B parameter model locally on consumer GPUs (e.g., NVIDIA RTX 4090 with 24GB VRAM). This is ideal for rapid prototyping and cost-free generation.

What's the difference between Wan 2.1, 2.2, and 2.6?

Wan 2.1 established the foundation with basic T2V/I2V. Wan 2.2 introduced the efficient Mixture-of-Experts (MoE) architecture. Wan 2.6 focused on narrative storytelling with features like consistent character identity, native audio, and multi-shot generation.

Is Wan AI open source?

Yes. The Wan 2.1 and Wan 2.2 model weights and inference code are publicly available under the Apache 2.0 license. Check each release's repository for its exact terms, since not every Wan version is distributed the same way.

Conclusion

Integrating Wan AI into Your VideoAny Workflow

Wan AI offers a powerful, flexible, and open-source solution for video generation, making it a strong contender for creators and developers.

Its continuous evolution, from foundational capabilities in Wan 2.1 to advanced narrative features in Wan 2.6, demonstrates a commitment to pushing the boundaries of AI video.

The choice between local and cloud deployment, coupled with its superior benchmark performance and commercial-friendly license, positions Wan AI as a valuable tool in any creative pipeline.

By leveraging Wan AI, you can achieve high-quality, consistent video outputs, whether for rapid prototyping or full-scale production.

Key takeaways for VideoAny users

- Wan AI provides a robust, open-source alternative to closed-source models.

- Its architectural innovations lead to superior performance and efficiency.

- Flexible deployment options cater to diverse hardware and budget constraints.

- The model's evolution focuses on enhancing both quality and narrative capabilities.

Consistent production systems outperform one-off prompt experiments over time.

Start Building

Launch this workflow on VideoAny

Open the studio, apply the workflow from this guide, and publish faster with fewer retries.

- Generate and refine in one browser workflow

- Keep output quality consistent across batches

- Scale from test runs to production volume